Main Difference

The main difference between enthalpy and entropy is that enthalpy is a measure of the energy in a thermodynamic system, a thermodynamic quantity equivalent to the system's total heat content, whereas entropy is a physical property of the state of a system that measures its disorder.

-

Enthalpy

Enthalpy, a property of a thermodynamic system, is equal to the system’s internal energy plus the product of its pressure and volume. In a system enclosed so as to prevent mass transfer, for processes at constant pressure, the heat absorbed or released equals the change in enthalpy.

The unit of measurement for enthalpy in the International System of Units (SI) is the joule. Other historical conventional units still in use include the British thermal unit (BTU) and the calorie.

Enthalpy comprises a system’s internal energy, which is the energy required to create the system, plus the amount of work required to make room for it by displacing its environment and establishing its volume and pressure. Enthalpy is a state function that depends only on the prevailing equilibrium state identified by the system’s internal energy, pressure, and volume. It is an extensive quantity.
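The definition above can be turned into a short calculation. The following is a minimal sketch, assuming SI units throughout; the function name `enthalpy` and the numerical values are hypothetical and chosen only for illustration.

```python
def enthalpy(internal_energy_j: float, pressure_pa: float, volume_m3: float) -> float:
    """Return H = U + pV in joules, with U in J, p in Pa and V in m^3."""
    return internal_energy_j + pressure_pa * volume_m3


# Hypothetical state: U = 1000 J, p = 100 kPa (1 bar), V = 0.01 m^3.
h_single = enthalpy(1000.0, 100_000.0, 0.01)

# Two identical copies side by side: U and V double, p is unchanged.
h_double = enthalpy(2000.0, 100_000.0, 0.02)

print(f"H for one copy:   {h_single:.1f} J")
print(f"H for two copies: {h_double:.1f} J")
```

Doubling the system at the same pressure doubles U and V and therefore doubles H, which is what it means for enthalpy to be an extensive quantity.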

Change in enthalpy (ΔH) is the preferred expression of system energy change in many chemical, biological, and physical measurements at constant pressure, because it simplifies the description of energy transfer. In a system enclosed so as to prevent matter transfer, at constant pressure, the enthalpy change equals the energy transferred from the environment through heat transfer or work other than expansion work.

The total enthalpy, H, of a system cannot be measured directly. The same situation exists in classical mechanics: only a change or difference in energy carries physical meaning. Enthalpy itself is a thermodynamic potential, so in order to measure the enthalpy of a system, we must refer to a defined reference point; therefore what we measure is the change in enthalpy, ΔH. ΔH is positive in endothermic (heat-absorbing) processes and negative in exothermic (heat-releasing) processes.

For processes under constant pressure, ΔH is equal to the change in the internal energy of the system, plus the pressure-volume work p ΔV done by the system on its surroundings (which is positive for an expansion and negative for a contraction). This means that the change in enthalpy under such conditions is the heat absorbed or released by the system through a chemical reaction or by external heat transfer. Enthalpies for chemical substances at constant pressure usually refer to standard state: most commonly 1 bar (100 kPa) pressure. Standard state does not, strictly speaking, specify a temperature (see standard state), but expressions for enthalpy generally reference the standard heat of formation at 25 °C (298 K).
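The constant-pressure relation described above, ΔH = ΔU + p ΔV, can be sketched as follows. The function names and the sample numbers are hypothetical, not taken from any measurement; SI units are assumed.

```python
def enthalpy_change_const_p(delta_u_j: float, pressure_pa: float, delta_v_m3: float) -> float:
    """Return dH = dU + p*dV for a process at constant pressure (joules)."""
    return delta_u_j + pressure_pa * delta_v_m3


def classify(delta_h_j: float) -> str:
    """Label a process by the sign of its enthalpy change."""
    if delta_h_j > 0:
        return "endothermic"
    if delta_h_j < 0:
        return "exothermic"
    return "thermoneutral"


# Hypothetical process at 1 bar: the internal energy falls by 500 J
# while the system expands by 0.002 m^3 (p*dV is about +200 J).
delta_h = enthalpy_change_const_p(-500.0, 100_000.0, 0.002)
print(f"dH = {delta_h:.1f} J ({classify(delta_h)})")
```

In this example the expansion work is positive, as the text states for an expansion, but the drop in internal energy is larger, so the net enthalpy change is negative and the process releases heat.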

The enthalpy of an ideal gas is a function of temperature only, so does not depend on pressure. Real materials at common temperatures and pressures usually closely approximate this behavior, which greatly simplifies enthalpy calculation and use in practical designs and analyses.
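One way to see why, sketched under the usual ideal-gas assumptions (the equation of state pV = nRT and an internal energy that depends on temperature alone; the symbols n, R and T are introduced here for illustration and do not appear in the text above):

$$H = U + pV = U(T) + nRT$$

For a fixed amount of gas, every term on the right depends only on temperature, so changing the pressure at fixed temperature leaves H unchanged.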

-

Entropy

In statistical mechanics, entropy is an extensive property of a thermodynamic system. It is closely related to the number Ω of microscopic configurations (known as microstates) that are consistent with the macroscopic quantities that characterize the system (such as its volume, pressure and temperature). Entropy expresses the number Ω of different configurations that a system defined by macroscopic variables could assume. Under the assumption that each microstate is equally probable, the entropy S is the natural logarithm of the number of microstates, multiplied by the Boltzmann constant $k_{\mathrm{B}}$. Formally (assuming equiprobable microstates),

$$S = k_{\mathrm{B}} \ln \Omega .$$

Macroscopic systems typically have a very large number Ω of possible microscopic configurations. For example, the entropy of an ideal gas is proportional to the number of gas molecules N. The number of molecules in 22.4 liters of gas at standard temperature and pressure is roughly 6.022 × 10²³ (the Avogadro number).
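To make the size of Ω concrete, here is a minimal sketch that applies S = k_B ln Ω to a toy model that is an assumption of this example, not something the text describes: N independent particles that can each be in one of two states, so that Ω = 2^N. Because Ω itself is far too large to store, the code works with ln Ω directly. The function names are hypothetical.

```python
import math

K_B = 1.380649e-23   # Boltzmann constant in J/K (SI defining value)
N_A = 6.02214076e23  # Avogadro constant in 1/mol (SI defining value)


def ln_microstates_two_state(n_particles: float) -> float:
    """ln(Omega) for the toy model Omega = 2**N, computed as N * ln(2)."""
    return n_particles * math.log(2)


def boltzmann_entropy(ln_omega: float) -> float:
    """S = k_B * ln(Omega) in J/K, taking ln(Omega) to avoid overflow."""
    return K_B * ln_omega


# One mole of two-state particles: roughly 5.76 J/K.
s_one_mole = boltzmann_entropy(ln_microstates_two_state(N_A))
s_two_moles = boltzmann_entropy(ln_microstates_two_state(2 * N_A))

print(f"S for one mole:  {s_one_mole:.3f} J/K")
print(f"S for two moles: {s_two_moles:.3f} J/K")
```

In this toy model the entropy doubles when the number of particles doubles, which illustrates the proportionality to N mentioned above.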

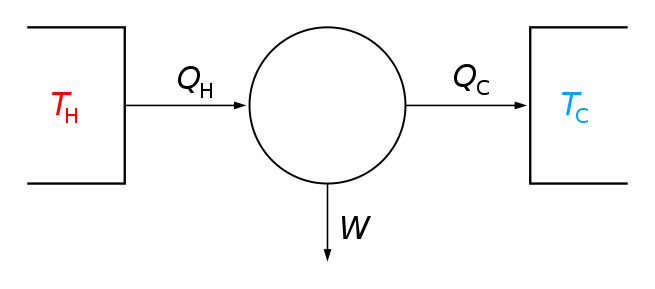

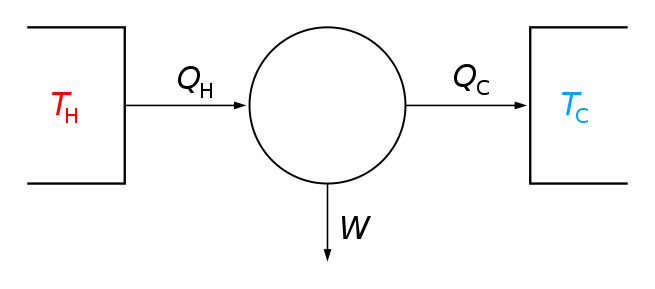

The second law of thermodynamics states that the entropy of an isolated system never decreases over time. Isolated systems spontaneously evolve towards thermodynamic equilibrium, the state with maximum entropy. Non-isolated systems, like organisms, may lose entropy, provided their environment’s entropy increases by at least that amount so that the total entropy either increases or remains constant. Therefore, the entropy in a specific system can decrease as long as the total entropy of the Universe does not. Entropy is a function of the state of the system, so the change in entropy of a system is determined by its initial and final states. In the idealization that a process is reversible, the total entropy does not change, while irreversible processes always increase the total entropy.
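A standard textbook illustration of this bookkeeping is heat flowing between two bodies. The sketch below assumes two reservoirs large enough that their temperatures stay fixed, so that each one's entropy change is the heat it receives divided by its temperature (the Clausius relation, which the text above does not state explicitly). The function name and numbers are hypothetical.

```python
def entropy_changes(heat_j: float, t_hot_k: float, t_cold_k: float) -> tuple[float, float, float]:
    """Entropy changes (hot, cold, total) in J/K when heat_j joules flow
    from a reservoir at t_hot_k to a reservoir at t_cold_k (kelvin)."""
    ds_hot = -heat_j / t_hot_k    # the hot reservoir loses entropy
    ds_cold = heat_j / t_cold_k   # the cold reservoir gains more than that
    return ds_hot, ds_cold, ds_hot + ds_cold


# Hypothetical case: 1000 J flows from 400 K to 300 K.
ds_hot, ds_cold, ds_total = entropy_changes(1000.0, 400.0, 300.0)
print(f"hot: {ds_hot:+.3f} J/K, cold: {ds_cold:+.3f} J/K, total: {ds_total:+.3f} J/K")

# Reversible limit: equal temperatures give zero total change.
_, _, ds_reversible = entropy_changes(1000.0, 300.0, 300.0)
print(f"total at equal temperatures: {ds_reversible:+.3f} J/K")
```

The hot reservoir's entropy decreases, yet the total increases, matching the statement that one system may lose entropy as long as the total does not decrease.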

Because it is determined by the number of random microstates, entropy is related to the amount of additional information needed to specify the exact physical state of a system, given its macroscopic specification. For this reason, it is often said that entropy is an expression of the disorder, or randomness of a system, or of the lack of information about it. The concept of entropy plays a central role in information theory.
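The link to information theory can be sketched with the Shannon entropy, H = −Σ p log₂ p, measured in bits. This formula is not given in the text above and is brought in here as the standard information-theoretic counterpart; the function name is hypothetical.

```python
import math
from typing import Iterable


def shannon_entropy_bits(probabilities: Iterable[float]) -> float:
    """Shannon entropy in bits of a discrete distribution.
    Zero-probability outcomes contribute nothing and are skipped."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


fair_coin = shannon_entropy_bits([0.5, 0.5])
eight_equal_states = shannon_entropy_bits([1 / 8] * 8)
certain_outcome = shannon_entropy_bits([1.0])

print(f"fair coin:          {fair_coin:.1f} bit")
print(f"eight equal states: {eight_equal_states:.1f} bits")
print(f"certain outcome:    {certain_outcome:.1f} bits")
```

For Ω equally probable states the result is log₂ Ω, the number of yes/no questions needed to pin down the exact state, which parallels S = k_B ln Ω up to the choice of constant and logarithm base.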

-

Enthalpy (noun)

In thermodynamics, a measure of the heat content of a chemical or physical system.

“H = U + pV, where H is enthalpy, U is internal energy, p is pressure, and V is volume.”

-

Entropy (noun)

A measure of the amount of information and noise present in a signal.

-

Entropy (noun)

The tendency of a system that is left to itself to descend into chaos.